Mention data, and thoughts may turn immediately to the insatiable data needs of artificial intelligence. But AI and large language models are just one of the forces driving the need for — and generating — large volumes of data. Consider, for instance, the broad field of materials science.

“At the end of the day, it’s the data that matters,” says UC Santa Barbara professor Tresa Pollock. She and McLean Echlin, a research scientist in her lab, know too well that progress in their own area of study and many others depends on instruments and experiments that generate enormous amounts of multimodal data, all of which has to be moved, stored, and retrieved over various time periods. The capability to merge large amounts of data from many different sources is at the heart of new discoveries across many fields.

Facing the recent data explosion ignited by increasingly sophisticated scientific instrumentation across the UCSB campus, Pollock, the Alcoa Distinguished Professor of Materials and then the interim dean of the UCSB College of Engineering (COE), successfully applied for a $250,000 grant from The Hearst Foundation. It enabled the purchase of state-of-the-art hardware to accelerate data acquisition, transfer, and analysis and to dramatically enhance storage capacity. That allowed many more graduate and undergraduate students to engage in research in this important emerging area, serving a Hearst Foundation goal of “preparing students to thrive in a global society.” The grant was also instrumental in UCSB’s ability to secure a $5 million NSF grant in 2025 to further advance the university’s Multimodal Imaging initiative.

“One of the foundational capabilities of the Materials Department is the continuously evolving suite of very high-end materials-characterization instruments, including electron microscopes and computed tomography (CT) machines, which now routinely generate large volumes of 3D and 4D (time-dependent) data,” Pollock reports. The facilities attract some five hundred users from departments across campus. “The instruments in the Materials Department facilities alone can generate tens of terabytes [a terabyte is one thousand gigabytes] worth of data in a few hours of operation,” she adds.

“The typical Google account on campus might accept gigabytes (GB) of data, so cloud computing can serve many research needs,” Echlin adds. “But we can easily generate that by imaging a single, one-micron-thick laser-sectioned slice of a material [during a 3D tomography measurement]. Such huge amounts of data require a bespoke way of dealing with it. The latencies involved in sending and retrieving so much data to and from the cloud — which can be many days — are too long. The cloud can only do so much. In the system made possible by the Hearst grant, we're talking about much faster ethernet connectivity to large storage pools, where no cloud is necessary.”

Data moves so rapidly that speed might not seem an issue, but, when many billions of data bits are being sent or retrieved, latencies lasting milliseconds add up fast. Thus, the closer the site where data is generated is to where it is stored and processed, the better.
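
A rough back-of-the-envelope sketch shows how those delays accumulate. The numbers below are illustrative assumptions, not measurements from the UCSB system: a hypothetical 10-terabyte dataset, a 10 Gbit/s local link, and a 1 Gbit/s remote link that also pays 50 milliseconds of latency on each of one million sequential requests. The function name and parameters are likewise made up for this example.

```python
TB = 10**12  # one decimal terabyte, in bytes


def transfer_seconds(total_bytes, bandwidth_bits_per_s, n_requests=0, latency_s=0.0):
    """Estimate transfer time as payload time plus accumulated per-request latency.

    Simplification: requests are assumed to run one after another, so their
    latencies add rather than overlap.
    """
    payload_time = total_bytes * 8 / bandwidth_bits_per_s
    latency_time = n_requests * latency_s
    return payload_time + latency_time


# Assumed scenario: the same 10 TB dataset over two different paths.
local = transfer_seconds(10 * TB, 10e9)  # nearby storage, latency ignored
remote = transfer_seconds(10 * TB, 1e9, n_requests=1_000_000, latency_s=0.05)

print(f"local:  {local / 3600:.1f} hours")
print(f"remote: {remote / 3600:.1f} hours")
```

Under these assumptions the local path takes a little over two hours, while the remote path takes about a day and a half, with more than a third of that time spent purely on waiting for round trips.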

Google Says “Bye”

So, how do researchers know when they have too much data and a new approach is needed? One sign, Pollock says, is that Google “kicks you out of the cloud. That’s what they did to us. We had a month to shrink our data or move it all somewhere else.”

In giving data-driven researchers the boot in fall 2023, Google identified some “top offenders,” Pollock continues. “We were among them, although I took that as a bit of a point of pride. Echlin was required to reduce his cloud data storage by ninety-five percent.”

Materials professor Daniel Gianola was also flagged as a data “culprit.”

“Our group, in collaboration with several others on campus, performs advanced electron microscopy and diffraction to study structure-property relationships in metallic and ceramic materials used in extreme environments,” Gianola explains. “These instruments are equipped with ultrafast state-of-the-art cameras that are sensitive down to single-electron events and can generate upwards of a terabyte of data per minute, which then must be processed efficiently to decipher the finest details of our materials. The cameras, several of which are located in the core Microscopy and Microanalysis Facility (MMF) on campus, have made the handling of data a central Google-cloud challenge representing a moment of deep data truth.”

“The exodus from Google meant that we had to start placing things proximal to each other [to gain the above-mentioned speed advantage],” Echlin says, adding that previous efforts on campus aimed at placing data creation, processing, and storage close to each other had been “mostly serendipitous.”

Echlin had for some time been seeking to optimize use of the High Performance Computing Center (HPC), which is part of the UCSB Center for Research Computing (CRC) in Elings Hall, also the site of the microscopy suite. “Having the infrastructure for connectivity in one building rather than spread across campus unifies a lot,” Echlin says. “Connecting those entities was an essential step that gave us places to put data archivally and also to literally stream the data directly from an instrument to storage as we're generating it.”

“Prior to receiving the Hearst grant, users were at the mercy of slow data rates to store, process, and archive data from those instruments, and they always struggled to have effective data backup and archiving options,” Gianola adds.

“The grant enables streamlined workflows across the data ecosystem. I believe that this system will serve as a model for other shared experimental facilities around the country,” Pollock adds. “Faculty like Dan Gianola, who in March received the 2026 Brimacombe Medal from The Minerals, Metals & Materials Society for outstanding mid-career scientists, will need these foundational data capabilities to continue to lead the field.”

As a result of the grant, Echlin says, “At least temporarily, the campus data needs are under control. We have a decently large buffer between how much storage we have and how much we need, but we’ll need more in a couple of years.”

System hardware like that installed in the HPC is only as valuable as the technical support that maintains it and ensures the integrity and accessibility of the data. The HPC provides that support. “They lease us some real estate and give us some real estate, where we can put some of the servers, and they help us maintain them,” Echlin says. “Support also comes from GRIT (General Research Information Technology), which has the focus of ensuring that the needs of researchers and scientists are represented on campus.”

A duplicate data setup has been placed in the Chemistry Building and mainly serves the cryo-electron microscope, which also generates vast volumes of data. Dorit Hanein, a faculty member in the Departments of Bioengineering as well as Chemistry & Biochemistry, manages the facility.

Campus Archival Resources

A key element of improving long-term data storage for all research efforts on campus derives from a very old technology that has been improved and now occupies a new data-storage niche. Says Pollock, “For archival data, we're moving to high-density tape.”

“Tape is associated with the birth of the computer age, but the densities have gone up, so that, even though it’s slower, a lot of data centers use it to store infrequently accessed data,” Echlin adds. “In our scenario, it will be used for data that was made long enough ago that it probably can go on a shelf, and if somebody really needs it, then they can get it out of that deep storage; they just won’t have immediate access.”
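
The age-based idea Echlin describes can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the campus archival service's actual workflow: the function name `archive_candidates`, the use of a file's last-modified time as a proxy for "made long enough ago," and the one-year threshold in the usage comment are all hypothetical.

```python
import time
from pathlib import Path

SECONDS_PER_DAY = 86400


def archive_candidates(root, min_age_days, now=None):
    """Return files under `root` last modified more than `min_age_days` ago.

    Assumption: modification time stands in for how long ago the data was
    generated. A real archival policy may use other criteria.
    """
    now = time.time() if now is None else now
    cutoff = now - min_age_days * SECONDS_PER_DAY
    return sorted(
        path
        for path in Path(root).rglob("*")
        if path.is_file() and path.stat().st_mtime < cutoff
    )


# Example usage (hypothetical directory): list files untouched for over a year.
# for path in archive_candidates("/data/microscopy", min_age_days=365):
#     print(path)
```

Files selected this way would be the ones moved to slower deep storage, while newer data stays on the fast storage pools near the instruments.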

As a result of the collaboration involving the UCSB Research Office and Hearst resources, an archival tape system has been established as a service to all researchers on campus to ensure long-term access to their data.

The Future of Data

In terms of data’s future, Pollock says, “I don't see any letup; there are valuable discoveries to be made as we develop algorithms to learn from large, multimodal datasets and understand their complexities. We can only make these discoveries if we have the large datasets at our disposal.” Looking further ahead, she adds, “As a field, we want a large, up-to-date digital library of materials that we can use to discover, design, and manufacture better materials for the future. There will undoubtedly be developments in AI that enable us to operate on the data and accelerate the cycle for designing new materials. We are just at the beginning of what is an exciting time to be a materials scientist. The first step, though, is to ensure that the data lives somewhere near where it is generated, and we are grateful to the Hearst Foundation for making that possible.”

By securing the grant, lead PI

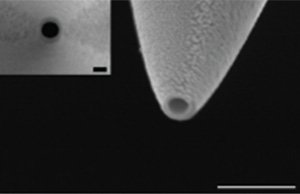

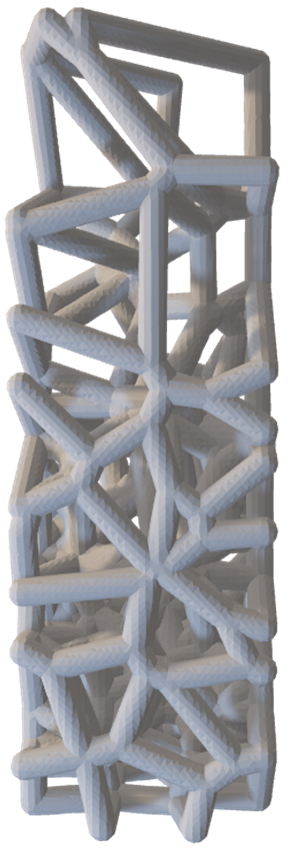

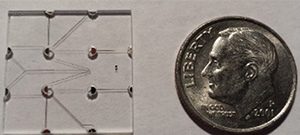

A central goal is translating the physics of such processes into functional devices, such as biosensors, passive flow-control systems, and implantable therapeutics that regulate themselves without external actuation. 3D printing enables rapid iteration on device architectures that would otherwise require weeks of cleanroom fabrication, accelerating the path from physical insight to working prototype. The photograph above shows a nanofluidic channel with integrated electrodes, with a dime for scale.
